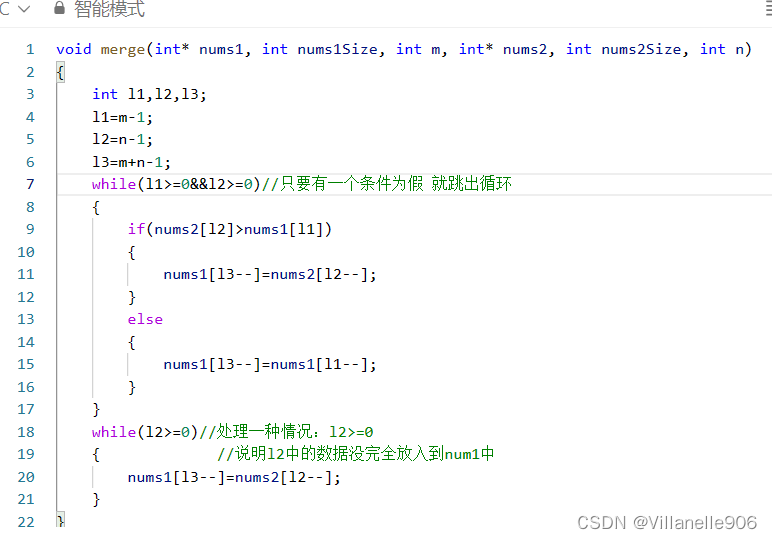

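I. Defining the residual structure

BasicBlock

The residual structure used by the 18-layer and 34-layer networks.

In these shallower networks the main branch is two 3x3 convolution layers in sequence, alongside a shortcut branch. The two branches are added together and the sum passes through a ReLU activation to produce the output.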

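Parameter notes

expansion = 1 : the ratio between the block's output channels and out_channel; 1 means the main branch does not change the channel count within the block

downsample=None : the downsample module for the shortcut branch; defaults to None

stride : a stride of 1 corresponds to the solid-line residual structure; a stride of 2 corresponds to the dashed-line residual structure

self.conv2 = nn.Conv2d(in_channels=out_channel, ...) : the output of the first convolution layer is the input of the second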
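As a quick check on the stride rule above, the spatial size produced by a convolution follows out = floor((in + 2 * padding - kernel) / stride) + 1. The sketch below is plain Python; the helper name `conv_out_size` is hypothetical and only illustrates the arithmetic, assuming no dilation.

```python
def conv_out_size(size, kernel, stride, padding):
    """Output height/width of a convolution (floor division, no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1


# 3x3 conv with padding=1: stride 1 keeps the size (solid-line block),
# stride 2 halves it (dashed-line block).
same = conv_out_size(56, kernel=3, stride=1, padding=1)
halved = conv_out_size(56, kernel=3, stride=2, padding=1)
print(same, halved)
```

This is why the 3x3 convolutions in the block use padding=1: with stride 1 the feature map keeps its height and width, so it can be added to the shortcut directly.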

    import torch
    import torch.nn as nn


    class BasicBlock(nn.Module):
        expansion = 1  # output channels = out_channel * expansion

        def __init__(self, in_channel, out_channel, stride=1, downsample=None, **kwargs):
            super(BasicBlock, self).__init__()
            # First 3x3 conv; stride=2 halves the height and width (dashed-line block)
            self.conv1 = nn.Conv2d(in_channels=in_channel, out_channels=out_channel,
                                   kernel_size=3, stride=stride, padding=1, bias=False)
            self.bn1 = nn.BatchNorm2d(out_channel)
            self.relu = nn.ReLU()
            # Second 3x3 conv; its input is the output of conv1
            self.conv2 = nn.Conv2d(in_channels=out_channel, out_channels=out_channel,
                                   kernel_size=3, stride=1, padding=1, bias=False)
            self.bn2 = nn.BatchNorm2d(out_channel)
            self.downsample = downsample

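Defining the forward pass

identity = x : the value carried by the shortcut branch

identity = self.downsample(x) : pass the input feature matrix x through the downsample module to get the output of the shortcut branch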
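To see why the shortcut needs a downsample module in the dashed-line case, here is a minimal sketch that compares tensor shapes on the two branches. It assumes PyTorch is installed; the 64-to-128 channel setting and the 56x56 input are illustrative values, not taken from the text.

```python
import torch
import torch.nn as nn

in_channel, out_channel, stride = 64, 128, 2

# Main branch: the first 3x3 conv of a dashed-line block
# (halves H and W, doubles the channel count).
main = nn.Conv2d(in_channel, out_channel, kernel_size=3,
                 stride=stride, padding=1, bias=False)

# Shortcut branch: 1x1 conv + BatchNorm with the same stride,
# so its output shape matches the main branch.
downsample = nn.Sequential(
    nn.Conv2d(in_channel, out_channel, kernel_size=1, stride=stride, bias=False),
    nn.BatchNorm2d(out_channel),
)

x = torch.randn(1, in_channel, 56, 56)
raw_shape = tuple(x.shape)                   # shortcut without downsample
main_shape = tuple(main(x).shape)            # main branch output
identity_shape = tuple(downsample(x).shape)  # shortcut with downsample
print(raw_shape, main_shape, identity_shape)
```

Without the downsample module the raw input cannot be added to the main-branch output, because both the channel count and the spatial size differ.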

        def forward(self, x):  # forward pass of BasicBlock
            identity = x
            if self.downsample is not None:
                identity = self.downsample(x)

            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)

            out = self.conv2(out)
            out = self.bn2(out)

            out += identity  # add the shortcut, then apply ReLU
            out = self.relu(out)

            return out

Bottleneck

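The residual structure used by the 50-layer, 101-layer, and 152-layer networks.

In these deeper networks the main branch consists of a 1x1 convolution that reduces the channel count, a 3x3 convolution, and a 1x1 convolution that expands the channel count again, alongside a shortcut branch.

The class follows this residual structure and takes largely the same parameters as BasicBlock. The difference is expansion = 4: the third convolution uses four times as many kernels as the first two.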
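The channel bookkeeping can be sketched in plain Python. The `width` expression mirrors the one in `Bottleneck.__init__`; the helper names `bottleneck_channels` and `conv_params`, and the comparison against two plain 3x3 convolutions at 256 channels, are illustrative assumptions rather than part of the original code.

```python
def bottleneck_channels(in_channel, out_channel, expansion=4,
                        groups=1, width_per_group=64):
    """Return (in, out) channel pairs for the 1x1, 3x3, and 1x1 convolutions."""
    width = int(out_channel * (width_per_group / 64.0)) * groups
    return [(in_channel, width), (width, width), (width, out_channel * expansion)]


def conv_params(c_in, c_out, kernel):
    """Weight count of a bias-free, non-grouped convolution."""
    return c_in * c_out * kernel * kernel


# A bottleneck with out_channel=64 fed by 256 channels.
plan = bottleneck_channels(256, 64)
bottleneck_weights = sum(conv_params(i, o, k) for (i, o), k in zip(plan, (1, 3, 1)))

# For comparison: two plain 3x3 convolutions operating at 256 channels.
two_3x3_weights = 2 * conv_params(256, 256, 3)
print(plan, bottleneck_weights, two_3x3_weights)
```

Squeezing to 64 channels before the 3x3 convolution is what keeps the weight count low even though the block's input and output both have 256 channels.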

    class Bottleneck(nn.Module):
        expansion = 4  # conv3 outputs out_channel * 4 channels

        def __init__(self, in_channel, out_channel, stride=1, downsample=None,
                     groups=1, width_per_group=64):
            super(Bottleneck, self).__init__()

            width = int(out_channel * (width_per_group / 64.)) * groups

            self.conv1 = nn.Conv2d(in_channels=in_channel, out_channels=width,
                                   kernel_size=1, stride=1, bias=False)  # squeeze channels
            self.bn1 = nn.BatchNorm2d(width)
            # -----------------------------------------
            self.conv2 = nn.Conv2d(in_channels=width, out_channels=width, groups=groups,
                                   kernel_size=3, stride=stride, bias=False, padding=1)
            self.bn2 = nn.BatchNorm2d(width)
            # -----------------------------------------
            self.conv3 = nn.Conv2d(in_channels=width, out_channels=out_channel*self.expansion,
                                   kernel_size=1, stride=1, bias=False)  # unsqueeze channels
            self.bn3 = nn.BatchNorm2d(out_channel*self.expansion)
            self.relu = nn.ReLU(inplace=True)
            self.downsample = downsample

Defining the forward pass

if self.downsample is not None : downsample is None for a solid-line block and is not None for a dashed-line block

        def forward(self, x):  # forward pass of Bottleneck
            identity = x
            if self.downsample is not None:
                identity = self.downsample(x)

            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)

            out = self.conv2(out)
            out = self.bn2(out)
            out = self.relu(out)

            out = self.conv3(out)
            out = self.bn3(out)

            out += identity
            out = self.relu(out)

            return out

II. Defining the network structure

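block : the residual block class; pass BasicBlock or Bottleneck depending on the depth of the network being built

blocks_num : a list giving the number of residual blocks in each stage

num_classes=1000 : the number of output classes

include_top=True : whether to include the classification head (average pooling and fully connected layer); set it to False to build other networks on top of ResNet

self.layer1 corresponds to conv2_x

self.layer2 corresponds to conv3_x

self.layer3 corresponds to conv4_x

self.layer4 corresponds to conv5_x. Each of these stacks of residual blocks is built by the _make_layer function.

self.avgpool = nn.AdaptiveAvgPool2d((1, 1)) : output size = (1, 1). Whatever the input height and width, adaptive average pooling produces a 1x1 output.

self.fc = nn.Linear(512 * block.expansion, num_classes) : the fully connected output layer. Its input size is the number of nodes obtained by flattening the feature matrix that comes out of average pooling. Because the height and width are both 1, the number of nodes equals the depth (channel count).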
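The reasoning about feature-map sizes can be traced with a little arithmetic. The sketch below assumes a 224x224 input (a common choice, not stated in the text) and uses hypothetical helper names; it only models the layers that change the spatial size, namely the stem convolution, the max pooling, and the first 3x3 convolution of each stage.

```python
def out_size(size, kernel, stride, padding):
    """Output height/width of a conv or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_resnet_sizes(size=224):
    """Spatial size after conv1, maxpool, and each of layer1..layer4."""
    sizes = []
    size = out_size(size, kernel=7, stride=2, padding=3)  # conv1
    sizes.append(size)
    size = out_size(size, kernel=3, stride=2, padding=1)  # maxpool
    sizes.append(size)
    for stride in (1, 2, 2, 2):  # stride of the first block in layer1..layer4
        size = out_size(size, kernel=3, stride=stride, padding=1)
        sizes.append(size)
    return sizes


sizes = trace_resnet_sizes(224)

# After adaptive average pooling H = W = 1, so the flattened size is the depth.
fc_in_basic = 512 * 1 * 1 * 1       # expansion = 1 (BasicBlock)
fc_in_bottleneck = 512 * 4 * 1 * 1  # expansion = 4 (Bottleneck)
print(sizes, fc_in_basic, fc_in_bottleneck)
```

With other input sizes the last stage produces a different height and width, but the adaptive pooling still reduces it to 1x1, so the input size of the fully connected layer does not change.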

    class ResNet(nn.Module):

        def __init__(self,
                     block,
                     blocks_num,
                     num_classes=1000,
                     include_top=True,
                     groups=1,
                     width_per_group=64):
            super(ResNet, self).__init__()
            self.include_top = include_top  # store the argument as an instance attribute
            self.in_channel = 64  # depth after max pooling, as listed in the table

            self.groups = groups
            self.width_per_group = width_per_group

            self.conv1 = nn.Conv2d(3, self.in_channel, kernel_size=7, stride=2,
                                   padding=3, bias=False)
            self.bn1 = nn.BatchNorm2d(self.in_channel)
            self.relu = nn.ReLU(inplace=True)
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            self.layer1 = self._make_layer(block, 64, blocks_num[0])
            self.layer2 = self._make_layer(block, 128, blocks_num[1], stride=2)
            self.layer3 = self._make_layer(block, 256, blocks_num[2], stride=2)
            self.layer4 = self._make_layer(block, 512, blocks_num[3], stride=2)
            if self.include_top:
                self.avgpool = nn.AdaptiveAvgPool2d((1, 1))  # output size = (1, 1)
                self.fc = nn.Linear(512 * block.expansion, num_classes)

            # Kaiming initialization for every convolution layer
            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

III. Defining the _make_layer function

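block : the residual block class defined earlier

channel : the number of kernels used by the convolution layers in the residual block

block_num : the number of residual blocks in this stage

block( ... ) : constructs the first residual block of the stage, with these arguments:

self.in_channel : the depth of the input feature matrix

channel : the number of kernels in the first convolution layer of the main branch

downsample=downsample : the downsample module

for _ in range(1, block_num): loop that appends the remaining solid-line residual blocks

return nn.Sequential(*layers) : unpack the list into positional arguments, combine the layers into one module, and return it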
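Which stages get a downsample module follows from the condition `stride != 1 or self.in_channel != channel * block.expansion`. The plain-Python sketch below replays that condition stage by stage; the function name `downsample_plan` is hypothetical, and the channel and stride tuples are the values the ResNet constructor passes to `_make_layer`.

```python
def downsample_plan(expansion, channels=(64, 128, 256, 512), strides=(1, 2, 2, 2)):
    """For each stage, report whether its first block needs a downsample shortcut."""
    in_channel = 64  # depth after the stem's max pooling
    plan = []
    for channel, stride in zip(channels, strides):
        needs = stride != 1 or in_channel != channel * expansion
        plan.append(needs)
        in_channel = channel * expansion  # input depth for the following blocks
    return plan


basic_plan = downsample_plan(expansion=1)       # BasicBlock: ResNet-18/34
bottleneck_plan = downsample_plan(expansion=4)  # Bottleneck: ResNet-50/101/152
print(basic_plan, bottleneck_plan)
```

Only the first stage differs between the two block types: BasicBlock keeps 64 channels there, so no projection is needed, while Bottleneck expands 64 channels to 256 and needs one even though the stride is 1.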

        def _make_layer(self, block, channel, block_num, stride=1):  # method of ResNet
            downsample = None
            # For conv2_x (stride=1) the 18/34-layer networks skip this branch and the
            # 50/101/152-layer networks do not; conv3_x to conv5_x (stride=2) always enter it.
            if stride != 1 or self.in_channel != channel * block.expansion:
                downsample = nn.Sequential(  # build the downsample module
                    nn.Conv2d(self.in_channel, channel * block.expansion,
                              kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(channel * block.expansion))

            layers = []  # start with an empty list
            # First block of the stage: may change the stride and channel count
            layers.append(block(self.in_channel,
                                channel,
                                downsample=downsample,
                                stride=stride,
                                groups=self.groups,
                                width_per_group=self.width_per_group))
            self.in_channel = channel * block.expansion

            # Remaining blocks: solid-line structure, stride 1, no downsample
            for _ in range(1, block_num):
                layers.append(block(self.in_channel,
                                    channel,
                                    groups=self.groups,
                                    width_per_group=self.width_per_group))

            return nn.Sequential(*layers)

Defining the forward pass

        def forward(self, x):  # forward pass of ResNet
            x = self.conv1(x)
            x = self.bn1(x)
            x = self.relu(x)
            x = self.maxpool(x)

            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            x = self.layer4(x)

            if self.include_top:
                x = self.avgpool(x)
                x = torch.flatten(x, 1)  # flatten, then feed the fully connected layer
                x = self.fc(x)

            return x

IV. Building networks of different depths

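The arguments are passed to ResNet in the order its constructor defines them: the block type, the list of block counts, then num_classes and include_top.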
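The block-count lists also explain the network names. A minimal sketch of the usual counting convention, assuming the stem convolution and the fully connected layer each count as one layer and the 1x1 convolutions on the shortcut branches are not counted; `nominal_depth` is a hypothetical helper.

```python
def nominal_depth(blocks_num, convs_per_block):
    """Stem conv + convolutions inside all residual blocks + final fully connected layer."""
    return 1 + sum(blocks_num) * convs_per_block + 1


depths = {
    "resnet34": nominal_depth([3, 4, 6, 3], convs_per_block=2),    # BasicBlock
    "resnet50": nominal_depth([3, 4, 6, 3], convs_per_block=3),    # Bottleneck
    "resnet101": nominal_depth([3, 4, 23, 3], convs_per_block=3),  # Bottleneck
}
print(depths)
```

ResNet-34 and ResNet-50 share the same block counts; the difference in depth comes entirely from the block type.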

    def resnet34(num_classes=1000, include_top=True):
        # https://download.pytorch.org/models/resnet34-333f7ec4.pth
        return ResNet(BasicBlock, [3, 4, 6, 3], num_classes=num_classes, include_top=include_top)


    def resnet50(num_classes=1000, include_top=True):
        # https://download.pytorch.org/models/resnet50-19c8e357.pth
        return ResNet(Bottleneck, [3, 4, 6, 3], num_classes=num_classes, include_top=include_top)


    def resnet101(num_classes=1000, include_top=True):
        # https://download.pytorch.org/models/resnet101-5d3b4d8f.pth
        return ResNet(Bottleneck, [3, 4, 23, 3], num_classes=num_classes, include_top=include_top)

To be continued...
